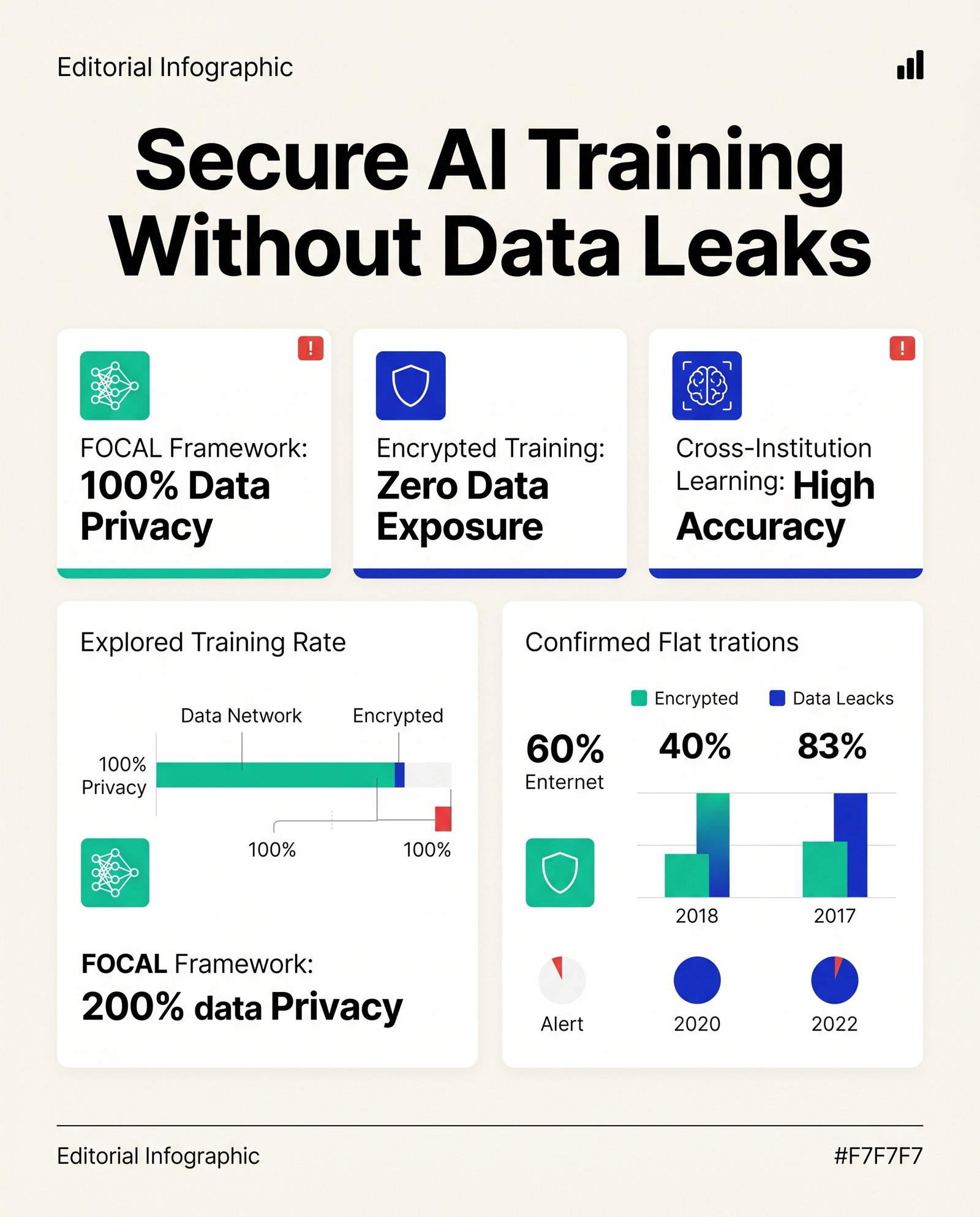

Encrypted AI trains on medical data without leaks

A new encryption framework lets hospitals train medical foundation models together without ever sharing raw patient data or risking privacy leaks.

Generative AI in head and neck cancer care: promising assistive tool, not ready for autonomous decisions. 🤖💉

Evaluating ChatGPT-5 & Grok-4 in sleep medicine: 92.4% diagnostic accuracy, but limited differential diagnosis performance. 💤📊

AI-generated hospital summaries show 57% usage, minimal harm risk, and reduced physician burnout. 📉🤖

Evaluating AI in oral cancer communication: Gemini and Grok show 90%+ referral safety but variable risks. 📊🦷

Global sentiment on health AI shows 65.26% positivity, with privacy concerns at 33.31%. 🌍🤖📊

Evaluating LLMs for orthodontic consultations: Grok-3 excels in reliability, while DeepSeek-V3 leads in readability. 📊🦷

AI-enhanced consent forms improved readability from a 14.1 to an 8.8 grade level, but content fidelity decreased significantly. 📉📄

Hyper-RAG enhances LLM accuracy by 12.3%, reducing hallucinations in medical applications. 🚀📊

Study shows radiologists struggle to differentiate AI-generated X-rays from real ones, raising concerns for medical image integrity. 🩻⚠️