⚡ Quick Summary

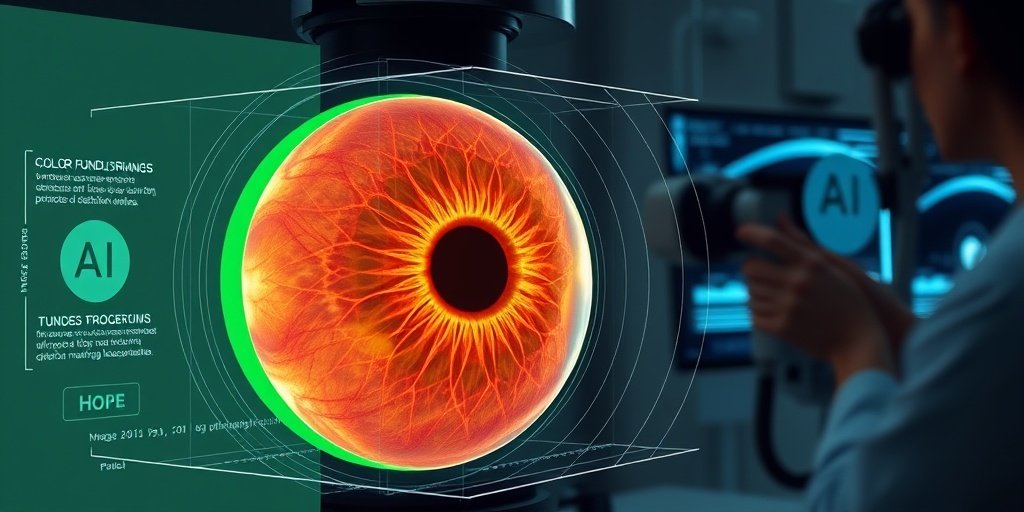

The study introduces MaxGRNet, a multi-axis vision transformer for eye disease classification from color fundus images. On a publicly available dataset it outperformed CNN and Vision Transformer baselines (ResNet50, Swin-T, MaxViT-T, and ViT-B16), reaching a macro-averaged test accuracy of 96.75%. It also uses Explainable AI (XAI) techniques to make its predictions more transparent.

🔍 Key Details

- 📊 Dataset: Publicly available eye disease classification dataset

- 🧩 Features used: Color fundus images

- ⚙️ Technology: MaxGRNet (MaxViT-based multi-axis vision transformer with GRN-based MLP layers)

- 🏆 Performance (macro-averaged, test set): Accuracy 96.75%, Precision 96.70%, Recall 96.80%

- 🔍 Methodology: Five-fold cross-validation for robustness assessment
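The summary says only that five-fold cross-validation was used. It does not say whether the folds were stratified by class or how the held-out test set was separated. The code below is a minimal sketch of a plain (unstratified), shuffled five-fold split with a fixed seed. The function name `k_fold_indices` is hypothetical and does not come from the paper.

```python
import random
from typing import List, Tuple


def k_fold_indices(n_samples: int, k: int = 5, seed: int = 0) -> List[Tuple[List[int], List[int]]]:
    """Return k (train_indices, val_indices) pairs covering range(n_samples).

    Every sample appears in exactly one validation fold, and fold sizes
    differ by at most one.
    """
    if not 2 <= k <= n_samples:
        raise ValueError("k must satisfy 2 <= k <= n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # fixed seed -> reproducible folds
    # The first n_samples % k folds receive one extra sample.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    folds = []
    start = 0
    for size in fold_sizes:
        val = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        folds.append((sorted(train), sorted(val)))
        start += size
    return folds


if __name__ == "__main__":
    for fold, (train, val) in enumerate(k_fold_indices(n_samples=12, k=5)):
        print(f"fold {fold}: {len(train)} train / {len(val)} val")
```

In each fold the model is trained on `train` and scored on `val`, and the per-fold scores are then averaged to estimate how well the model generalizes.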

🔑 Key Takeaways

- 👁️ Eye diseases like diabetic retinopathy, glaucoma, and cataracts pose significant health risks.

- 💡 MaxGRNet combines transformer-based attention mechanisms with Global Response Normalization (GRN)-based multi-layer perceptron (MLP) layers.

- 📈 Outperformed conventional CNN and Vision Transformer baselines, including ResNet50, Swin-T, MaxViT-T, and ViT-B16.

- 🔬 XAI techniques provide insights into the model’s decision-making process, enhancing interpretability.

- 🛠️ Rigorous preprocessing was applied to improve data consistency.

- 🌟 Paired t-tests confirmed that the improvements were statistically significant.

- 🌍 Potential applications in automated fundus image classification for ophthalmic research.
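Global Response Normalization (GRN) was introduced in ConvNeXt V2. It works in three steps:

1. It computes each channel's L2 norm over all spatial positions.
2. It divides that norm by the mean norm across channels.
3. It uses the result to rescale the features, adding learnable `gamma`/`beta` parameters and a residual connection.

This summary does not describe how MaxGRNet wires GRN into its MLP. The NumPy sketch below therefore assumes the ConvNeXt V2 placement (Linear, GELU, GRN, Linear), applied to a sequence of tokens. The names `grn_mlp`, `w1`, `w2`, and so on are hypothetical.

```python
import numpy as np


def grn(x: np.ndarray, gamma: np.ndarray, beta: np.ndarray, eps: float = 1e-6) -> np.ndarray:
    """Global Response Normalization for channels-last input of shape (batch, tokens, channels)."""
    # Aggregate: L2 norm of each channel over all tokens -> shape (batch, 1, channels)
    gx = np.sqrt(np.sum(x ** 2, axis=1, keepdims=True))
    # Normalize: each channel's norm relative to the mean norm across channels
    nx = gx / (np.mean(gx, axis=-1, keepdims=True) + eps)
    # Calibrate: rescale features, add learnable scale/shift and a residual path
    return gamma * (x * nx) + beta + x


def gelu(x: np.ndarray) -> np.ndarray:
    """Tanh approximation of GELU."""
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x ** 3)))


def grn_mlp(x, w1, b1, gamma, beta, w2, b2):
    """Hypothetical GRN-based MLP: expand -> GELU -> GRN -> project back."""
    hidden = gelu(x @ w1 + b1)         # (batch, tokens, hidden_dim)
    hidden = grn(hidden, gamma, beta)  # gamma and beta have shape (hidden_dim,)
    return hidden @ w2 + b2            # (batch, tokens, channels)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    dim, hidden_dim = 8, 32
    tokens = rng.normal(size=(2, 49, dim))  # e.g. a 7x7 window flattened to 49 tokens
    out = grn_mlp(
        tokens,
        rng.normal(scale=0.1, size=(dim, hidden_dim)), np.zeros(hidden_dim),
        # Zero-initialized gamma and beta (as in ConvNeXt V2) make GRN start as identity
        np.zeros(hidden_dim), np.zeros(hidden_dim),
        rng.normal(scale=0.1, size=(hidden_dim, dim)), np.zeros(dim),
    )
    print(out.shape)
```

Because GRN normalizes each channel's response relative to the other channels, it encourages competition between channels rather than simply rescaling activations.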

📚 Background

Eye diseases are a major global health concern and can lead to severe visual impairment or blindness if not diagnosed early. Automated classification has relied heavily on Convolutional Neural Networks (CNNs). These are effective but may lack the interpretability needed for clinical use. Explainable AI (XAI) offers a way to make such models more transparent in medical imaging.

🗒️ Study

The study aimed to develop a robust eye disease classification framework based on a multi-axis vision transformer, MaxGRNet. The researchers applied this architecture to color fundus images, using attention mechanisms to capture complex spatial and contextual relationships. They evaluated the model with five-fold cross-validation to assess its robustness and generalization.
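MaxGRNet is described as MaxViT-based. In MaxViT, multi-axis attention splits self-attention into two parts:

- Block attention attends within non-overlapping local windows.
- Grid attention attends across a sparse grid that spans the whole image.

Together they give each stage both local and global context at a cost linear in image size. The NumPy sketch below shows only the partitioning and attention pattern. It assumes the height and width are divisible by the window size `p` and the grid size `g`. It omits learned projections, multiple heads, normalization, MBConv, and the GRN-MLP, so it is not the authors' implementation.

```python
import numpy as np


def block_partition(x: np.ndarray, p: int) -> np.ndarray:
    """(H, W, C) -> (num_windows, p*p, C): non-overlapping local p x p windows."""
    h, w, c = x.shape
    x = x.reshape(h // p, p, w // p, p, c).transpose(0, 2, 1, 3, 4)
    return x.reshape(-1, p * p, c)


def block_unpartition(windows: np.ndarray, h: int, w: int, p: int) -> np.ndarray:
    """Inverse of block_partition."""
    c = windows.shape[-1]
    x = windows.reshape(h // p, w // p, p, p, c).transpose(0, 2, 1, 3, 4)
    return x.reshape(h, w, c)


def grid_partition(x: np.ndarray, g: int) -> np.ndarray:
    """(H, W, C) -> (num_groups, g*g, C): each group is a sparse g x g grid whose
    tokens lie H//g rows and W//g columns apart, so it spans the whole image."""
    h, w, c = x.shape
    x = x.reshape(g, h // g, g, w // g, c).transpose(1, 3, 0, 2, 4)
    return x.reshape(-1, g * g, c)


def grid_unpartition(groups: np.ndarray, h: int, w: int, g: int) -> np.ndarray:
    """Inverse of grid_partition."""
    c = groups.shape[-1]
    x = groups.reshape(h // g, w // g, g, g, c).transpose(2, 0, 3, 1, 4)
    return x.reshape(h, w, c)


def self_attention(tokens: np.ndarray) -> np.ndarray:
    """Single-head scaled dot-product attention within each group.
    Simplification: identity Q/K/V projections, no relative position bias."""
    d = tokens.shape[-1]
    scores = tokens @ tokens.transpose(0, 2, 1) / np.sqrt(d)
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ tokens


def multi_axis_attention(x: np.ndarray, p: int, g: int) -> np.ndarray:
    """Block (local) attention, then grid (sparse global) attention, each with a residual."""
    h, w, _ = x.shape
    x = x + block_unpartition(self_attention(block_partition(x, p)), h, w, p)
    x = x + grid_unpartition(self_attention(grid_partition(x, g)), h, w, g)
    return x
```

Both partitions produce groups of fixed size (`p*p` or `g*g` tokens). The attention cost per group therefore stays constant as the image grows, while the grid step still lets distant regions of the fundus image exchange information.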

📈 Results

MaxGRNet achieved a macro-averaged test accuracy of 96.75%, precision of 96.70%, and recall of 96.80%, outperforming conventional CNNs and other Vision Transformer variants. Paired t-tests confirmed that these improvements were statistically significant.
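The summary does not define "macro-averaged accuracy", so the sketch below computes only macro-averaged precision and recall from a confusion matrix. It also does not say which scores were paired in the t-tests. A common setup, assumed here, pairs the per-fold scores of two models evaluated on the same folds. All function names are hypothetical, and only the standard library is used.

```python
import math
from statistics import mean, stdev
from typing import List, Sequence, Tuple


def confusion_matrix(y_true: Sequence[int], y_pred: Sequence[int], n_classes: int) -> List[List[int]]:
    """Rows are true classes, columns are predicted classes."""
    cm = [[0] * n_classes for _ in range(n_classes)]
    for t, p in zip(y_true, y_pred):
        cm[t][p] += 1
    return cm


def macro_precision_recall(cm: List[List[int]]) -> Tuple[float, float]:
    """Unweighted mean of per-class precision and recall (each class counts equally)."""
    n = len(cm)
    precisions, recalls = [], []
    for k in range(n):
        tp = cm[k][k]
        predicted_k = sum(cm[i][k] for i in range(n))  # column sum
        actual_k = sum(cm[k])                          # row sum
        precisions.append(tp / predicted_k if predicted_k else 0.0)
        recalls.append(tp / actual_k if actual_k else 0.0)
    return mean(precisions), mean(recalls)


def paired_t_statistic(scores_a: Sequence[float], scores_b: Sequence[float]) -> Tuple[float, int]:
    """t statistic and degrees of freedom for a paired t-test on matched scores (e.g. per fold)."""
    if len(scores_a) != len(scores_b) or len(scores_a) < 2:
        raise ValueError("need two equally long score lists with at least 2 entries")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    sd = stdev(diffs)
    if sd == 0:
        raise ValueError("all differences are equal: t statistic is undefined")
    t = mean(diffs) / (sd / math.sqrt(len(diffs)))
    return t, len(diffs) - 1
```

With five folds, `paired_t_statistic` has 4 degrees of freedom. Turning `t` into a p-value requires the t distribution. `scipy.stats.ttest_rel` performs the full test directly if SciPy is available.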

🌍 Impact and Implications

Building on advanced transformer architectures, the proposed framework could improve the automated classification of eye diseases from fundus images. The authors describe it as robust and computationally feasible for research-oriented evaluation. Its XAI-based visual explanations make the model's decisions easier to inspect, which supports future decision-support and research-oriented ophthalmic applications.
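The paper's title refers to insertion-deletion operations. In XAI evaluation, these terms usually denote the deletion and insertion metrics introduced with RISE (Petsiuk et al., 2018):

- Deletion removes pixels from the image in order of saliency.
- Insertion adds pixels to a blank image in the same order.

In both cases the model's score for the predicted class is tracked, and the area under the resulting curve measures how faithful the explanation is. The summary does not describe the authors' exact procedure. The sketch below therefore assumes a zero-valued baseline, a fixed number of steps, and a hypothetical `predict` callable that returns the target-class probability.

```python
from typing import Callable, List, Tuple

import numpy as np


def deletion_insertion_auc(
    image: np.ndarray,
    saliency: np.ndarray,
    predict: Callable[[np.ndarray], float],
    mode: str = "deletion",
    steps: int = 10,
    baseline: float = 0.0,
) -> Tuple[float, List[float]]:
    """Area under the class-score curve as salient pixels are removed or inserted.

    image: (H, W) or (H, W, C); saliency: (H, W); predict returns the target-class score.
    Deletion: a lower AUC means the map found the evidence the model relies on.
    Insertion: a higher AUC is better.
    """
    img = np.asarray(image, dtype=float)
    n = saliency.size
    order = np.argsort(saliency.ravel())[::-1]  # most salient pixel first
    pixels = img.reshape(n, -1)                 # (pixels, channels)
    blank = np.full_like(pixels, baseline)
    if mode == "deletion":
        current, source = pixels.copy(), blank
    elif mode == "insertion":
        current, source = blank.copy(), pixels
    else:
        raise ValueError("mode must be 'deletion' or 'insertion'")

    per_step = int(np.ceil(n / steps))
    fractions, scores = [0.0], [predict(current.reshape(img.shape))]
    for start in range(0, n, per_step):
        idx = order[start:start + per_step]
        current[idx] = source[idx]  # delete or insert this batch of pixels
        fractions.append(min(start + per_step, n) / n)
        scores.append(predict(current.reshape(img.shape)))

    # Trapezoidal rule over the fraction of pixels changed
    f, s = np.array(fractions), np.array(scores)
    auc = float(np.sum((s[1:] + s[:-1]) / 2.0 * np.diff(f)))
    return auc, scores
```

A faithful saliency map should give a low deletion AUC and a high insertion AUC. For insertion, RISE starts from a blurred copy of the image rather than zeros, which avoids feeding the model unnaturally blank inputs.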

🔮 Conclusion

This study highlights the potential of MaxGRNet for eye disease classification. Its high accuracy and interpretability make it a promising basis for automated diagnostic tools. Continued research and development in this area could lead to further advances in such tools and, ultimately, better patient outcomes in ophthalmology.


MaxGRNet: A multi-axis vision transformer with improved generalization for eye disease classification using explainable AI with insertion-deletion operations on fundus images.

Abstract

Eye diseases, including diabetic retinopathy (DR), glaucoma, and cataracts, represent a major global health concern and can lead to severe visual impairment or blindness if not identified in a timely manner. This study proposes a novel eye disease classification framework based on a multi-axis vision transformer (MaxViT), applied to color fundus images and combined with Explainable Artificial Intelligence (XAI) techniques to enhance model transparency. The proposed architecture integrates transformer-based attention mechanisms with Global Response Normalization (GRN)-based multi-layer perceptron (MLP) layers to effectively capture complex spatial and contextual relationships within fundus images. The model was evaluated on a publicly available eye disease classification dataset using a five-fold cross-validation strategy to assess its robustness and generalization. The experimental results show that the proposed approach consistently outperforms conventional Convolutional Neural Networks (CNNs) and Vision Transformer (ViT) variants, including ResNet50, Swin-T, MaxViT-T, and ViT-B16. The model achieved macro-averaged test accuracy, precision, and recall of 96.75%, 96.70%, and 96.80%, respectively, and paired t-tests confirmed that these improvements were statistically significant. Rigorous preprocessing techniques were employed to improve data consistency, and XAI-based visual explanations provided insights into the model’s decision-making process, supporting interpretability in ophthalmic image analysis. Overall, the proposed MaxViT-based framework is robust and computationally feasible for research-oriented evaluation of automated fundus image classification, highlighting the potential of advanced transformer architectures for future decision-support and research-oriented ophthalmic applications.

Authors: Santo MMH, Bhoyan FH, Farhad FIJ, Farid FA, Chakraborty S, Mehedi MHK, Uddin J, Karim HBA

Journal: PLoS One

Citation: Santo MMH, et al. MaxGRNet: A multi-axis vision transformer with improved generalization for eye disease classification using explainable AI with insertion-deletion operations on fundus images. PLoS One. 2026; 21:e0346329. doi: 10.1371/journal.pone.0346329